Casci—Understanding photography

Problem

Most people nowadays own a camera without understanding its basic functions. This is mostly because cameras are not self-explanatory, so only the automatic mode gets used. As a result, individuality, awareness and creativity get lost.

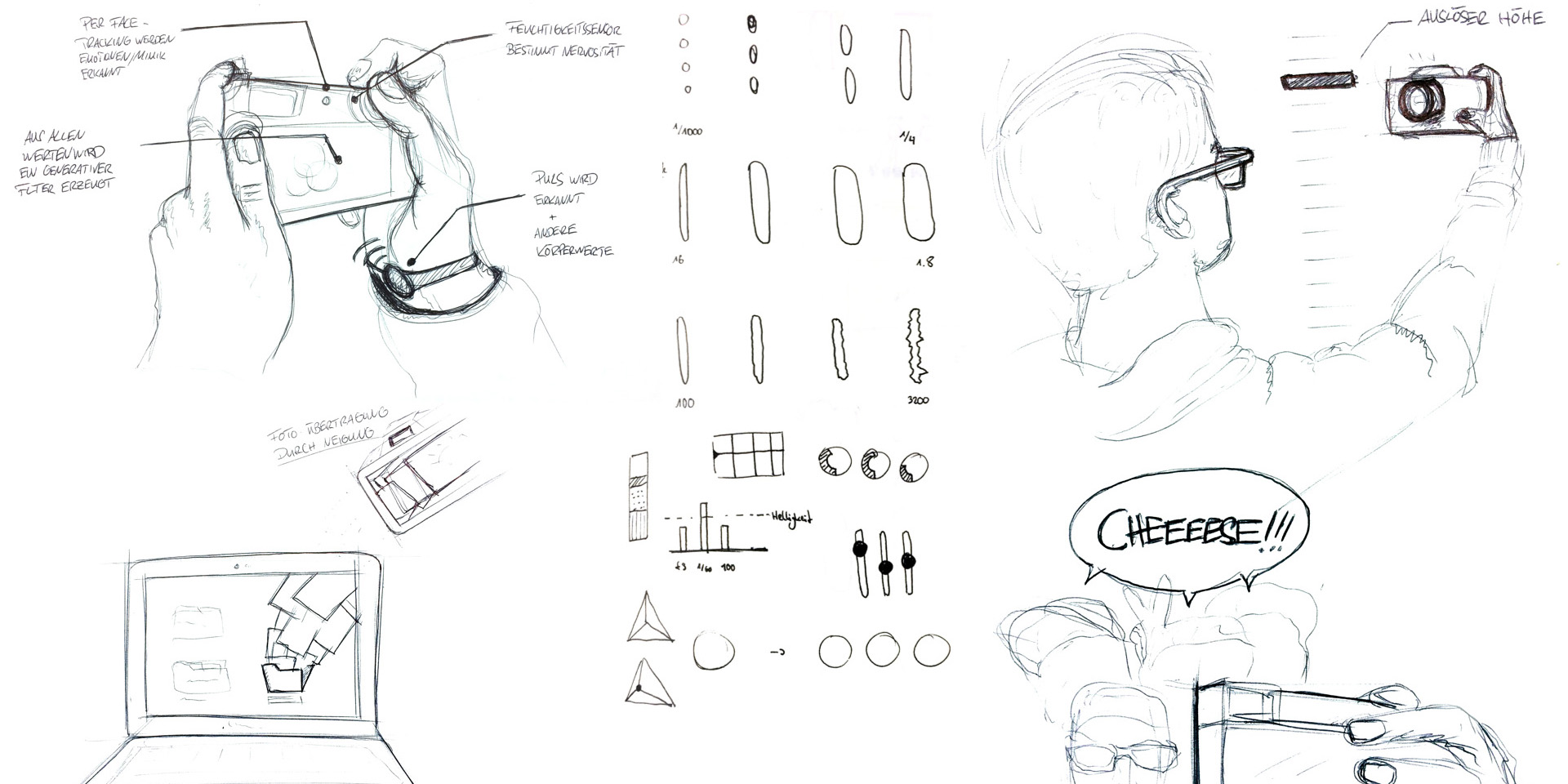

Ideation

How can a camera behave more like a human? Using the methods Random Input and Collaborative Sketching, we generated many ideas for how a camera could be more intuitive. Besides visualizing the main parameters (aperture, exposure and ISO), we also focused on an experimental, sensory approach to photography.

Final concept

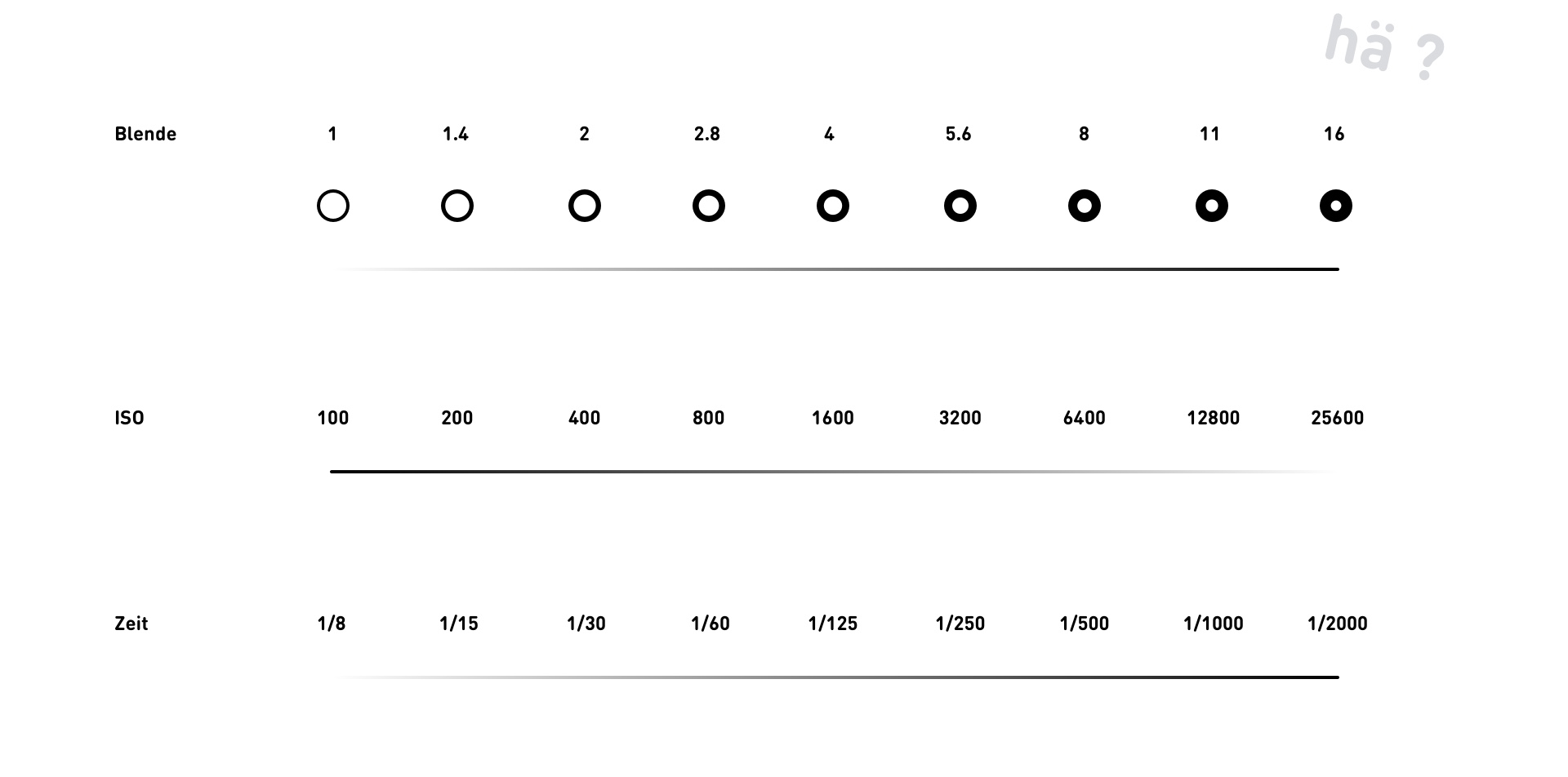

Through haptic and visual feedback, the camera explains to the user how aperture, exposure and ISO relate to each other. By changing the values, the user learns through different senses.
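To make the relationship between the three parameters concrete, here is a minimal sketch of the standard exposure value formula, EV = log2(N² / t) - log2(ISO / 100), where N is the f-number and t the exposure time in seconds. The function name `exposureValue` is ours for illustration and is not taken from the prototype.

```javascript
// Illustrative helper, not part of the Casci prototype.
// Returns the exposure value normalized to ISO 100:
//   EV = log2(N^2 / t) - log2(ISO / 100)
// fNumber: aperture as f-number (e.g. 8 for f/8)
// shutterSeconds: exposure time in seconds (e.g. 1 / 100)
// iso: sensor sensitivity (e.g. 100, 200, 400)
function exposureValue(fNumber, shutterSeconds, iso) {
  const settingsEv = Math.log2((fNumber * fNumber) / shutterSeconds);
  const isoOffset = Math.log2(iso / 100);
  return settingsEv - isoOffset;
}

// Halving the exposure time while doubling the ISO keeps the result the same:
const a = exposureValue(8, 1 / 100, 100);
const b = exposureValue(8, 1 / 200, 200);
console.log(a.toFixed(2), b.toFixed(2));
```

Two settings with the same result produce the same image brightness, which is the kind of trade-off the feedback is meant to let the user feel.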

How it works

Besides a touchscreen for quick navigation, a scroll wheel controls the parameters. The closer the user gets to the optimal value, the harder the wheel becomes to scroll. Skipping the wheel changes the value.
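The resistance behaviour can be sketched roughly as follows. This is an assumption about one possible mapping, not the prototype's actual code: the function `wheelResistance`, the linear curve and the `rangeStops` parameter are all hypothetical.

```javascript
// Hypothetical mapping from "distance to the optimal value" to wheel resistance.
// currentStops / optimalStops: parameter positions measured in stops.
// rangeStops: distance (in stops) at which the wheel turns freely (assumed value).
// Returns a resistance between 0 (free) and 1 (hardest to scroll).
function wheelResistance(currentStops, optimalStops, rangeStops = 3) {
  const distance = Math.abs(currentStops - optimalStops);
  // Clamp so that anything further away than rangeStops gives zero resistance.
  const normalized = Math.min(distance / rangeStops, 1);
  return 1 - normalized;
}

console.log(wheelResistance(0, 0));   // at the optimum: maximum resistance
console.log(wheelResistance(1.5, 0)); // halfway through the range
console.log(wheelResistance(5, 0));   // far away: wheel turns freely
```

A linear curve is the simplest choice; a real device might use a steeper curve near the optimum so the user feels a clear stop.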

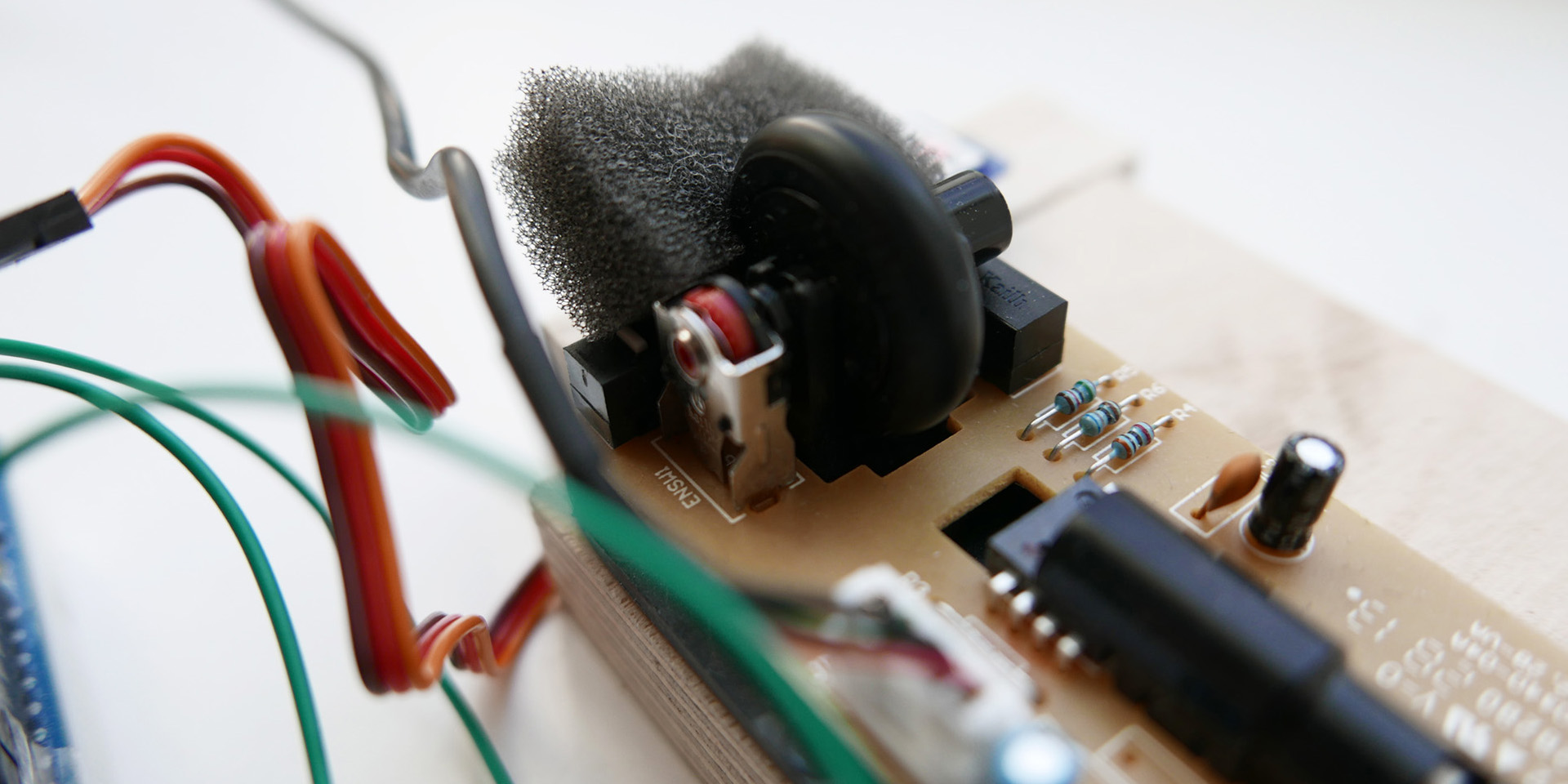

Prototypes

The final result consists of two prototypes. The mobile one, which runs on an iPhone 6, shows the touch interaction and menu navigation. The stationary prototype lets users experience the parameter adjustments with haptic feedback and the navigation with hardware components. It receives input values from an Arduino and sends them via Breakout.js to framer.js, which adjusts the interface.